It’s Earth Day, so time for a brief break from my laserlike, day-long focus on complexity theory, and for my long-promised post about climate change.

Let me lay my cards on the table. I think that we’re in the same position with climate change today that we were with Hitler in 1938. That position, in case you’re wondering, is on the brink of a shitstorm. And as with the lead-up to that earlier shitstorm, some people are sanely worried, some are in active denial, and the rest are in “passive denial” — accepting the obvious if pressed, but preferring to think about more pleasant things like NP intersect coNP. It’s frustrating even to have to defend the “worried” view explicitly, since it’s so clear which way the debate will have been settled 50 years from now.

At the same time, I can’t ignore that there are thoughtful, humane, intelligent people — just like there were in the 1930’s — who downplay, equivocate over, and rationalize away the shitstorm that (again from my perspective) is gathering over our heads.

After all, isn’t the climate change business more complicated than all that? Do we even know the Earth is getting warmer? Okay, so maybe we do know, but do we really know why? Couldn’t it just be a coincidence that we’re pumping out billions of tons of CO2 and methane each year, and 19th-century physics tells us that will make the temperature rise, and the temperature is in fact rising as predicted? What about feedbacks like cloud cover, ocean absorption, and ice caps? And sure, maybe the feedbacks could at most buy a few decades, and maybe some of them (like melting ice caps darkening the Earth’s surface) are rapidly making things worse rather than better, but even so, wouldn’t the loss of some low-lying countries be more than balanced out by warmer winters in Ontario? And granted, maybe if our goal were to run a massive, irreversible geophysics experiment on an entire planet, it might be smarter to start with (say) Venus or Mars instead of Earth, but still — wouldn’t it be easier to adapt to a climate unlike any the planet has experienced in the last 200 million years than to drive Priuses instead of Cherokees? Isn’t it just a question of how to allocate resources, of how to maximize expected utility? And aren’t there other risks we should be more worried about, like bird flu, or out-of-control nanorobots converting the planet into grey goo?

I’ll tackle some of these questions in future posts or comments — though for most of them, the professionals at RealClimate can do a better job than I can. Today I want to try a different tack: flying over most of this well-worn ground, and aiming immediately for the one place where the climate skeptics invariably end up anyway when all of their other arguments have been exhausted. That place is the Chicken Little Argument.

“Back in the 1970’s, all you academics were screaming about overpopulation, and the oil shortage, and global cooling. That’s right, cooling: the exact opposite of warming! And before that it was radiation poisoning, or an accidental nuclear launch, and before that probably something else. Yet time after time, the doomsayers were wrong. So why should this time be any different? Why should ours be the one time when the so-called crisis is real, when it’s not a figment of a few scientists’ overheated imaginations?”

The first response, of course, is that sometimes the alarmists were right. More than once, our civilization really did face an existential threat, only to escape it by a hair. I already mentioned Hitler, but there’s another example that’s closer to the subject at hand.

In the 1970’s, Mario Molina and F. Sherwood Rowland realized that chlorofluorocarbons, then a common refrigerant, propellant, and cleaning solvent, could be broken down by UV light into compounds that then attacked the ozone molecules in the upper atmosphere. Had the resulting loss of ozone continued for much longer, the increased UV light reaching the Earth’s surface would eventually have decimated populations of plankton and cyanobacteria, which in turn could have destabilized much of the world’s food chain.

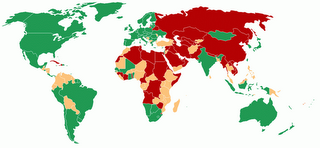

As with global warming today, the initial response of the chemical companies was to attack the ivory-tower, tree-hugging, funding-crazed, Cassandra-like messenger. But in 1985, Joseph Farman, Brian Gardiner, and Jonathan Shanklin looked into a weird error in ozone measurements over Antarctica, which seemed to show more than half the ozone there disappearing from September to December. When it turned out not to be an error, even DuPont decided that planetary suicide wasn’t in its best interest, and CFCs were phased out in most of the world by 1996. We survived that one.

But there’s a deeper response to the Chicken Little Argument, one that goes straight to the meat of the issue (chicken, I suppose). This is that, when we’re dealing with “indexical” questions — questions of the form “why us? why were we born in this era rather than a different one?” — we can’t apply the same rules of induction that work elsewhere.

To illustrate, consider a hypothetical planet where the population doubles every generation, until it finally depletes the planet’s resources and goes extinct. (Like bacteria in a petri dish.) Now imagine that in every generation, there are doomsayers preaching that the end is nigh, who are laughed off by folks with more common sense. By assumption, eventually the doomsayers will be right — their having been wrong in the past is just a precondition for there being a debate in the first place. But there’s a further point. If you imagine yourself chosen uniformly at random among all people ever to live on the planet, then with about 99% probability, you’ll belong to one of the last seven generations. The assumption of exponential growth makes it not just possible, but probable, that you’re near the end.
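Where does the 99% come from? Here is a quick sanity check in Python, assuming (for illustration only) that the planet starts with a single person and that generation g has exactly 2^g people. The function name is my own; nothing here is a real demographic model.

```python
from fractions import Fraction

def fraction_in_last_generations(total_generations: int, last_k: int) -> Fraction:
    """Fraction of everyone who ever lives that belongs to the final last_k generations.

    Toy assumption: generation g (g = 0, 1, ..., total_generations - 1) has exactly 2**g people.
    """
    sizes = [2**g for g in range(total_generations)]
    return Fraction(sum(sizes[-last_k:]), sum(sizes))

# The answer barely depends on how long the planet lasts: it approaches 1 - 1/128 = 127/128.
for n in (10, 20, 40):
    print(n, float(fraction_in_last_generations(n, 7)))
```

The last seven generations contain 2^n - 2^(n-7) of the 2^n - 1 people who ever live, so the fraction tends to 127/128, or a bit over 99%, no matter when the end comes.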

That’s one formulation (though not the best one) of the infamous Doomsday Argument, which says (roughly speaking) that the probability of human history continuing for millions of years longer is less than one would naïvely expect, since if it did so continue, then we would occupy an improbable position near the very beginning of that history. Obviously cavemen could have made the same argument, and they would have been wrong. The point is that, if everyone in history makes the Doomsday Argument, then most people who make it (or a suitable version of it) will by definition be right.

On hearing the Doomsday Argument for the first time, almost everyone thinks there must be a fallacy somewhere. But once you accept one key assumption, the Argument is a trivial consequence of Bayes’ Rule. So what is that key assumption? It’s what Nick Bostrom, in one of the only metaphysical page-turners ever written, calls the Self-Sampling Assumption (SSA). The SSA states that, if you consider a possible history of the world to have a prior probability p, and if that history contains N>0 people who you imagine you “could have been,” then you should judge the probability of your being a specific one of those people within that history to be p/N. Sound obvious? Well, you might imagine instead that you need to weight the probability of each history by the number of people in it — so that, if a history has ten times as many people who you “could have been,” then you would be ten times as likely to exist in that history in the first place. Bostrom calls this alternative the Self-Indication Assumption (SIA).

It’s not hard to show that switching from SSA to SIA exactly cancels out the effect of the Doomsday Argument — bringing you back to your “naïve” prior probabilities for each possible history. In short, if you accept SSA then the Doomsday Argument goes through, while if you accept SIA then it doesn’t.
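To see the cancellation concretely, here is a minimal Bayes’ Rule sketch with two hypothetical histories, "doom soon" and "doom late," assuming equal priors and made-up population totals (200 billion vs. 200 trillion people). The function and its parameters are illustrative, not anything from Bostrom’s book. Under SSA, the chance of being person number r in a history with N people is 1/N; SIA additionally multiplies each history’s prior by N.

```python
from fractions import Fraction

def posterior(priors, populations, birth_rank, rule="SSA"):
    """Posterior over histories, given that your birth rank is birth_rank.

    priors[h]: prior probability of history h
    populations[h]: total number N_h of people who ever live in history h
    rule: "SSA" weights each history by its prior alone;
          "SIA" first multiplies each prior by N_h.
    """
    if rule not in ("SSA", "SIA"):
        raise ValueError("rule must be 'SSA' or 'SIA'")
    weights = {}
    for h, p in priors.items():
        n = populations[h]
        if birth_rank > n:
            weights[h] = Fraction(0)  # history too short to contain anyone with this rank
            continue
        base = p * n if rule == "SIA" else p
        weights[h] = base * Fraction(1, n)  # chance of being person #birth_rank is 1/N_h
    total = sum(weights.values())
    return {h: w / total for h, w in weights.items()}

# Hypothetical numbers, chosen only for illustration.
priors = {"doom_soon": Fraction(1, 2), "doom_late": Fraction(1, 2)}
populations = {"doom_soon": 200_000_000_000, "doom_late": 200_000_000_000_000}
rank = 100_000_000_000  # suppose you are roughly the 100-billionth person born

print(posterior(priors, populations, rank, "SSA"))  # strongly favors doom_soon
print(posterior(priors, populations, rank, "SIA"))  # back to the 50/50 prior
```

Under SSA, "doom soon" jumps from 1/2 to 1000/1001, since the populations differ by a factor of 1000. Under SIA, the factor of N in the prior exactly cancels the 1/N likelihood, which is the whole content of the claim above.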

But before you buy that “SIA not SSA” bumper-sticker for your SUV, let me point out the downsides. Firstly, SIA forces you to treat your own existence as a random variable — not as something you can just condition on! Indeed, the image that springs to mind is that of a warehouse full of souls, not all of which will get “picked” to inhabit a body. And secondly, assuming it’s logically possible for there to be a universe with an infinite number of people, SIA implies that we must live in such a universe. Usually, if you reach a definite empirical conclusion starting from pure thought, your best bet is to look around you. You might find yourself in a medieval monastery or an Amsterdam coffeeshop.

On the other hand, as Bostrom observed, the SSA carries some heavy baggage of its own. For example, it suggests the following “algorithm” by which the first people ever to live, call them (I dunno) “Adam” and “Eve,” could solve NP-complete problems in polynomial time. They simply guess a random solution, having formed the firm intention to

- have children (leading eventually to an exponential number of descendants) if the solution is wrong, or

- have no children if the solution is right.

(For this algorithm, it really does have to be “Adam and Eve, not Adam and Steve.”) Here’s the punchline: the prior probability of Adam and Eve’s choosing a wrong solution is close to 1, but under SSA, the posterior probability is close to 0. For if Adam and Eve guess a wrong solution, then with overwhelming probability they wouldn’t be Adam and Eve to begin with — they would be one of the numerous descendants thereof.
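The punchline is again just Bayes’ Rule under SSA. Here is a toy version, assuming (hypothetically) that a wrong guess leads to a fixed number of descendants, that a right guess leaves a history containing only Adam and Eve, and that under SSA your chance of being one of those two people is 2/N in a history with N people. The function name and parameters are invented for this sketch.

```python
from fractions import Fraction

def adam_eve_posterior_wrong(n_bits, descendants_if_wrong, num_solutions=1):
    """P(guess was wrong | I am Adam or Eve) under SSA, in a toy model.

    Adam and Eve guess one of 2**n_bits candidate solutions uniformly at random.
    Wrong guess: they have children, so the history has 2 + descendants_if_wrong people.
    Right guess: they have no children, so the history has exactly 2 people.
    """
    p_right = Fraction(num_solutions, 2**n_bits)
    p_wrong = 1 - p_right
    likelihood_wrong = Fraction(2, 2 + descendants_if_wrong)  # SSA: 2/N chance of being Adam or Eve
    likelihood_right = Fraction(2, 2)                         # every person in this history is Adam or Eve
    evidence = p_wrong * likelihood_wrong + p_right * likelihood_right
    return p_wrong * likelihood_wrong / evidence

# A 20-bit search problem, with 2**60 descendants if the guess is wrong (illustrative numbers).
print(float(1 - Fraction(1, 2**20)))                 # prior probability of a wrong guess
print(float(adam_eve_posterior_wrong(20, 2**60)))    # posterior: roughly 2**-39
```

With no descendants at all, the posterior just equals the prior; it is the exponential crowd of would-be descendants that makes a wrong guess "self-refuting" from Adam and Eve’s vantage point.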

Indeed, there’s a loony, crackpot paper showing that if Adam and Eve had a quantum computer, then they could even solve PP-complete problems in polynomial time. Every day I’m dreading the Exxon ad: “If the assumptions underlying the Doomsday Argument were valid, it’s not just that Adam and Eve could solve NP-complete problems in polynomial time. Modulo a plausible derandomization assumption, a theorem of S. Aaronson implies they could decide the entire polynomial hierarchy! So go ahead, buy that monster SUV.”

If this discussion seems hopelessly speculative, well, that’s exactly the point. The Doomsday Argument is hopelessly speculative, but not more so than the Chicken Little Argument. Ultimately, both arguments rest on metaphysical assumptions about “why we’re us and not someone else” — about the probability of having been born into one historical epoch rather than another. This is not the sort of question that science gives us the tools to answer.

For me, then, the Doomsday Argument is like an ethereal missile that neutralizes the opposing missile of the Chicken Little Argument — leaving the ground troops below to slog it out based on, you know, actual facts and evidence. So I think the environmentalists’ message to the climate contrarians should be as follows: if you stick to the science, then we will too. But if you fall back on your favorite lazy meta-argument — “why should the task of saving the world have fallen to this generation, and not to some other one?” — then don’t be surprised to find that metareasoning cuts both ways.
