Scott Aaronson’s Brief Foreword:

Harvey Lederman is a distinguished analytic philosopher who moved from Princeton to UT Austin a few years ago. Since his arrival, he’s become one of my best friends among the UT professoriate. He’s my favorite kind of philosopher, the kind who sees scientists as partners in discovering the truth, and also has a great sense of humor. He and I are both involved in UT’s new AI and Human Objectives Initiative (AHOI), which is supported by Open Philanthropy.

The other day, Harvey emailed me an eloquent meditation he wrote on what will be the meaning of life if AI doesn’t kill us all, but “merely” does everything we do better than we do it. While the question is of course now extremely familiar to me, Harvey’s erudition—bringing to bear everything from speculative fiction to the history of polar exploration—somehow brought the stakes home for me in a new way.

Harvey mentioned that he’d sent his essay to major magazines but hadn’t had success. So I said, why not a Shtetl-Optimized guest post? Harvey replied—what might be the highest praise this blog has ever received—well, that would be even better than the national magazine, as it would reach more relevant people.

And so without further ado, I present to you…

ChatGPT and the Meaning of Life, by Harvey Lederman

For the last two and a half years, since the release of ChatGPT, I’ve been suffering from fits of dread. It’s not every minute, or even every day, but maybe once a week, I’m hit by it—slackjawed, staring into the middle distance—frozen by the prospect that someday, maybe pretty soon, everyone will lose their job.

At first, I thought these slackjawed fits were just a phase, a passing thing. I’m a philosophy professor; staring into the middle distance isn’t exactly an unknown disease among my kind. But as the years have begun to pass, and the fits have not, I’ve begun to wonder if there’s something deeper to my dread. Does the coming automation of work foretell, as my fits seem to say, an irreparable loss of value in human life?

The titans of artificial intelligence tell us that there’s nothing to fear. Dario Amodei, CEO of Anthropic, the maker of Claude, suggests that: “historical hunter-gatherer societies might have imagined that life is meaningless without hunting,” and “that our well-fed technological society is devoid of purpose.” But of course, we don’t see our lives that way. Sam Altman, the CEO of OpenAI, sounds so similar, the text could have been written by ChatGPT. Even if the jobs of the future will look as “fake” to us as ours do to “a subsistence farmer”, Altman has “no doubt they will feel incredibly important and satisfying to the people doing them.”

Alongside these optimists, there are plenty of pessimists who, like me, are filled with dread. Pope Leo XIV has decried the threats AI poses to “human dignity, labor and justice”. Bill Gates has written about his fear that “if we solved big problems like hunger and disease, and the world kept getting more peaceful: What purpose would humans have then?” And Douglas Hofstadter, the computer scientist and author of Gödel, Escher, Bach, has spoken eloquently of his terror and depression at “an oncoming tsunami that is going to catch all of humanity off guard.”

Who should we believe? The optimists with their bright visions of a world without work, or the pessimists who fear the end of a key source of meaning in human life?

I was brought up, maybe like you, to value hard work and achievement. In our house, scientists were heroes, and discoveries grand prizes of life. I was a diligent, obedient kid, and eagerly imbibed what I was taught. I came to feel that one way a person’s life could go well was to make a discovery, to figure something out.

I had the sense already then that geographical discovery was played out. I loved the heroes of the great Polar Age, but I saw them—especially Roald Amundsen and Robert Falcon Scott—as the last of their kind. In December 1911, Amundsen reached the South Pole using skis and dogsleds. Scott reached it a month later, in January 1912, after ditching the motorized sleds he’d hoped would help, and man-hauling the rest of the way. As the black dot of Amundsen’s flag came into view on the ice, Scott was devastated to reach this “awful place”, “without the reward of priority”. He would never make it back.

Scott’s motors failed him, but they spelled the end of the great Polar Age. Even Amundsen took to motors on his return: in 1924, he made a failed attempt for the North Pole in a plane, and, in 1926, he successfully flew over it, in a dirigible. Already by then, the skis and dogsleds of the decade before were outdated heroics of a bygone world.

We may be living now in a similar twilight age for human exploration in the realm of ideas. Akshay Venkatesh, whose discoveries earned him the 2018 Fields Medal, mathematics’ highest honor, has written that the “mechanization of our cognitive processes will alter our understanding of what mathematics is”. Terry Tao, a 2006 Fields Medalist, expects that in just two years AI will be a copilot for working mathematicians. He envisions a future where thousands of theorems are proven all at once by mechanized minds.

Now, I don’t know any more than the next person where our current technology is headed, or how fast. The core of my dread isn’t based on the idea that human redundancy will come in two years rather than twenty, or, for that matter, two hundred. It’s a more abstract dread, if that’s a thing, dread about what it would mean for human values, or anyway my values, if automation “succeeds”: if all mathematics—and, indeed all work—is done by motor, not by human hands and brains.

A world like that wouldn’t be good news for my childhood dreams. Venkatesh and Tao, like Amundsen and Scott, live meaningful lives, lives of purpose. But worthwhile discoveries like theirs are a scarce resource. A territory, once seen, can’t be seen first again. If mechanized minds consume all the empty space on the intellectual map, lives dedicated to discovery won’t be lives that humans can lead.

The right kind of pessimist sees here an important argument for dread. If discovery is valuable in its own right, the loss of discovery could be an irreparable loss for humankind.

A part of me would like this to be true. But over these last strange years, I’ve come to think it’s not. What matters, I now think, isn’t being the first to figure something out, but the consequences of the discovery: the joy the discoverer gets, the understanding itself, or the real-life problem their knowledge solves. Alexander Fleming discovered penicillin, and through that work saved thousands, perhaps millions of lives. But if it were to emerge, in the annals of an outlandish future, that an alien discovered penicillin thousands of years before Fleming did, we wouldn’t think that Fleming’s life was worse, just because he wasn’t first. He eliminated great suffering from human life; the alien discoverer, if they’re out there, did not. So, I’ve come to see, it’s not discoveries themselves that matter. It’s what they bring about.

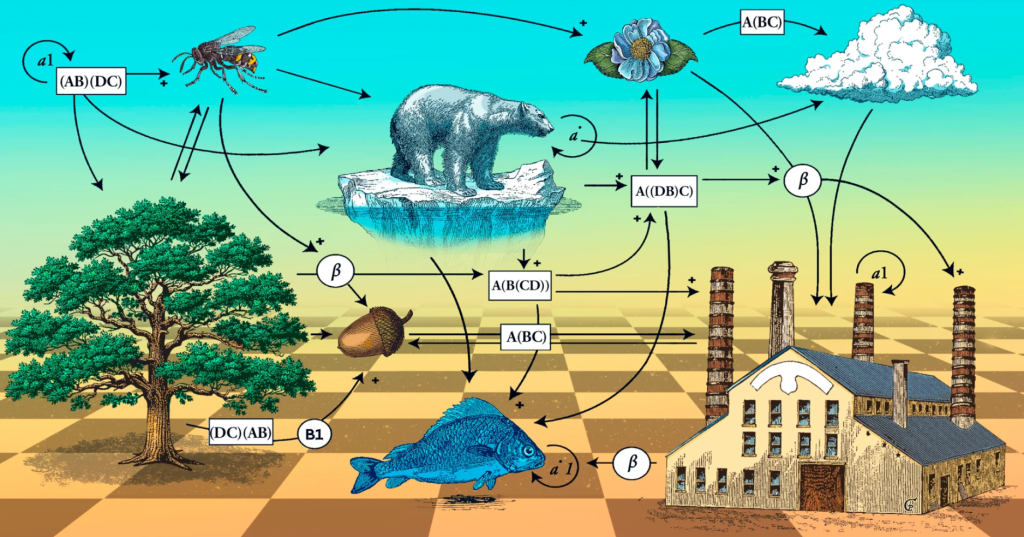

But the advance of automation would mean the end of much more than human discovery. It could mean the end of all necessary work. Already in 1920, the Czech playwright Karel Capek asked what a world like that would mean for the values in human life. In the first act of R.U.R.—the play which introduced the modern use of the word “robot”—Capek has Henry Domin, the manager of Rossum’s Universal Robots (the R.U.R. of the title), offer his corporation’s utopian pitch. “In ten years”, he says, their robots will “produce so much corn, so much cloth, so much everything” that “There will be no poverty.” “Everybody will be free from worry and liberated from the degradation of labor.” The company’s engineer, Alquist, isn’t convinced. Alquist (who, incidentally, ten years later, will be the only human living, when the robots have killed the rest) retorts that “There was something good in service and something great in humility”, “some kind of virtue in toil and weariness”.

Service—work that meets others’ significant needs and wants—is, unlike discovery, clearly good in and of itself. However we work—as nurses, doctors, teachers, therapists, ministers, lawyers, bankers, or, really, anything at all—working to meet others’ needs makes our own lives go well. But, as Capek saw, all such work could disappear. In a “post-instrumental” world, where people are comparatively useless and the bots meet all our important needs, there would be no needed work for us to do, no suffering to eliminate, no diseases to cure. Could the end of such work be a better reason for dread?

The hardline pessimists say that it is. They say that the end of all needed work would not only be a loss of some value to humanity, as everyone should agree. For them it would be a loss to humanity on balance, an overall loss, that couldn’t be compensated in another way.

I feel a lot of pull to this pessimistic thought. But once again, I’ve come to think it’s wrong. For one thing, pessimists often overlook just how bad most work actually is. In May 2021, Luo Huazhang, a 31-year-old ex-factory worker in Sichuan, wrote a viral post entitled “Lying Flat is Justice”. Luo had searched at length for a job that, unlike his factory job, would allow him time for himself, but he couldn’t find one. So he quit, biked to Tibet and back, and commenced his lifestyle of lying flat, doing what he pleased, reading philosophy, contemplating the world. The idea struck a chord with overworked young Chinese, who, it emerged, did not find “something great” in their “humility”. The movement inspired memes, selfies flat on one’s back, and even an anthem.

That same year, as the Great Resignation in the United States took off, the subreddit r/antiwork played to similar discontent. Started in 2013, under the motto “Unemployment for all, not only the rich!”, the forum went viral in 2021, starting with a screenshot of a quitting worker’s texts to his supervisor (“No thanks. Have a good life”), and culminating in labor actions, first supporting striking workers at Kellogg’s by spamming their job application site, and then attempting to support a similar strike at McDonald’s. It wasn’t just young Chinese who hated their jobs.

In Automation and Utopia: Human Flourishing in a World without Work, the Irish lawyer and philosopher John Danaher imagines an antiwork techno-utopia, with plenty of room for lying flat. As Danaher puts it: “Work is bad for most people most of the time.” “We should do what we can to hasten the obsolescence of humans in the arena of work.”

The young Karl Marx would have seen both Domin’s and Danaher’s utopias as a catastrophe for human life. In his notebooks from 1844, Marx describes an ornate and almost epic process, where, by meeting the needs of others through production, we come to recognize the other in ourselves, and through that recognition, come at last to self-consciousness, the full actualization of our human nature. The end of needed work, for the Marx of these notes, would be the impossibility of fully realizing our nature, the end, in a way, of humanity itself.

But such pessimistic lamentations have come to seem to me no more than misplaced machismo. Sure, Marx’s and my culture, the ethos of our post-industrial professional class, might make us regret a world without work. But we shouldn’t confuse the way two philosophers were brought up with the fundamental values of human life. What stranger narcissism could there be than bemoaning the end of others’ suffering, disease, and need, just because it deprives you of the chance to be a hero?

The first summer after the release of ChatGPT—the first summer of my fits of dread—I stayed with my in-laws in Val Camonica, a valley in the Italian Alps. The houses in their village, Sellero, are empty and getting emptier; the people on the streets are old and getting older. The kids that are left—my wife’s elementary school class had, even then, a full complement of four—often leave for better lives. But my in-laws are connected to this place, to the houses and streets where they grew up. They see the changes too, of course. On the mountains above, the Adamello, Italy’s largest glacier, is retreating faster every year. But while the shows on Netflix change, the same mushrooms appear in the summer, and the same chestnuts are collected in the fall.

Walking in the mountains of Val Camonica that summer, I tried to find parallels for my sense of impending loss. I thought about William Shanks, a British mathematician who calculated π to 707 digits by hand in 1873 (he made a mistake at 527; almost 200 digits were wrong). He later spent years of his life, literally years, on a table of the reciprocals of the primes up to one-hundred and ten thousand, calculating in the morning by hand, and checking it over in the afternoon. That was his life’s work. Just sixty years after his death, though, already in the 1940s, the table on which his precious mornings were spent, the few mornings he had on this earth, could be made by a machine in a day.

I feel sad thinking about Shanks, but I don’t feel grief for the loss of calculation by hand. The invention of the typewriter, and the death of handwritten notes seemed closer to the loss I imagined we might feel. Handwriting was once a part of your style, a part of who you were. With its decline some artistry, a deep and personal form of expression, may be lost. When the bots help with everything we write, couldn’t we too lose our style and voice?

But more than anything I thought of what I saw around me: the slow death of the dialects of Val Camonica and the culture they express. Chestnuts were at one time so important for nutrition here that in the village of Paspardo, a street lined with chestnut trees is called “bread street” (“Via del Pane”). The hyper-local dialects of the valley, outgrowths sometimes of a single family’s inside jokes, have words for all the phases of the chestnut. There’s a porridge made from chestnut flour that, in Sellero, goes by ‘skelt’, but is ‘pult’ in Paspardo, a cousin of ‘migole’ in Malonno, just a few villages away. Boiled, chestnuts are tetighe; dried on a grat, biline or bascocc, which, seasoned and boiled become broalade. The dialects don’t just record what people eat and ate; they recall how they lived, what they saw, and where they went. Behind Sellero, every hundred-yard stretch of the walk up to the cabins where the cows were taken to graze in summer, has its own name. Aiva Codaola. Quarsanac. Coran. Spi. Ruc.

But the young people don’t speak the dialect anymore. They go up to the cabins by car, too fast to name the places along the way. They can’t remember a time when the cows were taken up to graze. Some even buy chestnuts in the store.

Grief, you don’t need me to tell you, is a complicated beast. You can grieve for something even when you know that, on balance, it’s good that it’s gone. The death of these dialects, of the stories told on summer nights in the mountains with the cows, is a loss reasonably grieved. But you don’t hear the kids wishing more people would be forced to stay or speak this funny-sounding tongue. You don’t even hear the old folks wishing they could go back fifty years—in those days it wasn’t so easy to be sure of a meal. For many, it’s better this way, not the best it could be, but still better, even as they grieve what they stand to lose and what they’ve already lost.

The grief I feel, imagining a world without needed work, seems closest to this kind of loss. A future without work could be much better than ours, overall. But, living in that world, or watching as our old ways passed away, we might still reasonably grieve the loss of the work that once was part of who we were.

In the last chapter of Edith Wharton’s Age of Innocence, Newland Archer contemplates a world that has changed dramatically since, thirty years earlier, before these new-fangled telephones and five-day transatlantic ships, he renounced the love of his life. Awaiting a meeting that his free-minded son Dallas has organized with Ellen Olenska, the woman Newland once loved, he wonders whether his son, and this whole new age, can really love the way he did and does. How could their hearts beat like his, when they’re always so sure of getting what they want?

There have always been things to grieve about getting old. But modern technology has given us new ways of coming to be out of date. A generation born in 1910 did their laundry in Sellero’s public fountains. They watched their grandkids grow up with washing machines at home. As kids, my in-laws worked with their families to dry the hay by hand. They now know, abstractly, that it can all be done by machine. Alongside newfound health and ease, these changes brought, as well, a mix of bitterness and grief: grief for the loss of gossip at the fountains or picnics while bringing in the hay; and also bitterness, because the kids these days just have no idea how easy they have it now.

As I look forward to the glories that, if the world doesn’t end, my grandkids might enjoy, I too feel prospective bitterness and prospective grief. There’s grief, in advance, for what we now have that they’ll have lost: the formal manners of my grandparents they’ll never know, the cars they’ll never learn to drive, and the glaciers that will be long gone before they’re born. But I also feel bitter about what we’ve been through that they won’t have to endure: small things like folding the laundry, standing in security lines or taking out the trash, but big ones too—the diseases which will take our loved ones that they’ll know how to cure.

All this is a normal part of getting old in the modern world. But the changes we see could be much faster and grander in scale. Amodei of Anthropic speculates that a century of technological change could be compressed into the next decade, or less. Perhaps it’s just hype, but—what if it’s not? It’s one thing for a person to adjust, over a full life, to the washing machine, the dishwasher, the air-conditioner, one by one. It’s another, in five years, to experience the progress of a century. Will I see a day when childbirth is a thing of the past? What about sleep? Will our ‘descendants’ have bodies at all?

And this round of automation could also lead to unemployment unlike any our grandparents saw. Worse, those of us working now might be especially vulnerable to this loss. Our culture, or anyway mine—professional America of the early 21st century—has apotheosized work, turning it into a central part of who we are. Where others have a sense of place—their particular mountains and trees—we’ve come to locate ourselves with professional attainment, with particular degrees and jobs. For us, ‘workists’ that so many of us have become, technological displacement wouldn’t just be the loss of our jobs. It would be the loss of a central way we have of making sense of our lives.

None of this will be a problem for the new generation, for our kids. They’ll know how to live in a world that could be—if things go well—far better overall. But I don’t know if I’d be able to adapt. Intellectual argument, however strong, is weak against the habits of years. I fear they’d look at me, stuck in my old ways, with the same uncomprehending look that Dallas Archer gives his dad, when Newland announces that he won’t go see Ellen Olenska, the love of his life, after all. “Say”, as Newland tries to explain to his dumbfounded son, “that I’m old fashioned, that’s enough.”

And yet, the core of my dread is not about aging out of work before my time. I feel closest to Douglas Hofstadter, the author of Gödel, Escher, Bach. His dread, like mine, isn’t only about the loss of work today, or the possibility that we’ll be killed off by the bots. He fears that even a gentle superintelligence will be “as incomprehensible to us as we are to cockroaches.”

Today, I feel part of our grand human projects—the advancement of knowledge, the creation of art, the effort to make the world a better place. I’m not in any way a star player on the team. My own work is off in a little backwater of human thought. And I can’t understand all the details of the big moves by the real stars. But even so, I understand enough of our collective work to feel, in some small way, part of our joint effort. All that will change. If I were to be transported to the brilliant future of the bots, I wouldn’t understand them or their work enough to feel part of the grand projects of their day. Their work would have become, to me, as alien as ours is to a roach.

But I’m still persuaded that the hardline pessimists are wrong. Work is far from the most important value in our lives. A post-instrumental world could be full of much more important goods—from rich love of family and friends, to new undreamt-of works of art—which would more than compensate for the loss of value from the loss of our work.

Of course, even the values that do persist may be transformed in almost unrecognizable ways. In Deep Utopia: Life and Meaning in a Solved World, the futurist and philosopher Nick Bostrom imagines how things might look. In one of the most memorable sections of the book—right up there with an epistolary novella about the exploits of Pignolius the pig (no joke!)—Bostrom says that even child-rearing may be something that we, if we love our children, would come to forgo. In a truly post-instrumental world, a robot intelligence could do better for your child, not only in teaching the child to read, but also in showing unbreakable patience and care. If you’ll snap at your kid, when the robot would not, it would only be selfishness for you to get in the way.

It’s a hard question whether Bostrom is right. At least some of the work of care isn’t like eliminating suffering or ending mortal disease. The needs or wants are small-scale stuff, and the value we get from helping each other might well outweigh the fact that we’d do it worse than a robot could.

But even supposing Bostrom is right about his version of things, and we wouldn’t express our love by changing diapers, we could still love each other. And together with our loved ones and friends, we’d have great wonders to enjoy. Wharton has Newland Archer wonder at five-day transatlantic ships. But what about five-day journeys to Mars? These days, it’s a big deal if you see the view from Everest with your own eyes. But Olympus Mons on Mars is more than twice as tall.

And it’s not just geographical tourism that could have a far expanded range. There’d be new journeys of the spirit as well. No humans would be among the great writers or sculptors of the day, but the fabulous works of art a superintelligence could make could help to fill our lives. Really, for almost any aesthetic value you now enjoy—sentimental or austere, minute or magnificent, meaningful or jocular—the bots would do it much better than we have ever done.

Humans could still have meaningful projects, too. In 1976, about a decade before any of Altman, Amodei or even I were born, the Canadian philosopher Bernard Suits argued that “voluntary attempts to overcome unnecessary obstacles” could give people a sense of purpose in a post-instrumental world. Suits calls these “games”, but the name is misleading; I prefer “artificial projects”. The projects include things we would call games like chess, checkers and bridge, but also things we wouldn’t think of as games at all, like Amundsen’s and Scott’s exploits to the Pole. Whatever we call them, Suits—who’s followed here explicitly by Danaher, the antiwork utopian and, implicitly, by Altman and Amodei—is surely right: even as things are now, we get a lot of value from projects we choose, whether or not they meet a need. We learn to play a piece on the piano, train to run a marathon, or even fly to Antarctica to “ski the last degree” to the Pole. Why couldn’t projects like these become the backbone of purpose in our lives?

And we could have one real purpose, beyond the artificial ones, as well. There is at least one job that no machine can take away: the work of self-fashioning, the task of becoming and being ourselves. There’s an aesthetic accomplishment in creating your character, an artistry of choice and chance in making yourself who you are. This personal style includes not just wardrobe or tattoos, not just your choice of silverware or car, but your whole way of being, your brand of patience, modesty, humor, rage, hobbies and tastes. Creating this work of art could give some of us something more to live for.

Would a world like that leave any space for human intellectual achievement, the stuff of my childhood dreams? The Buddhist Pali Canon says that “All conditioned things are impermanent—when one sees this with wisdom, one turns away from suffering.” Apparently, in this text, the intellectual achievement of understanding gives us a path out of suffering. To arrive at this goal, you don’t have to be the first to plant your flag on what you’ve understood; you just have to get there.

A secular version of this idea might hold, more simply, that some knowledge or understanding is good in itself. Maybe understanding the mechanics of penicillin matters mainly because of what it enabled Fleming and others to do. But understanding truths about the nature of our existence, or even mathematics, could be different. That sort of understanding plausibly is good in its own right, even if someone or something has gotten there first.

Venkatesh the Fields Medalist seems to suggest something like this for the future of math. Perhaps we’ll change our understanding of the discipline, so that it’s not about getting the answers, but instead about human understanding, the artistry of it perhaps, or the miracle of the special kind of certainty that proof provides.

Philosophy, my subject, might seem an even more promising place for this idea. For some, philosophy is a “way of life”. The aim isn’t necessarily an answer, but constant self-examination for its own sake. If that’s the point, then in the new world of lying flat, there could be a lot of philosophy to do.

I don’t myself accept this way of seeing things. For me, philosophy aims at the truth as much as physics does. But I of course agree that there are some truths that it’s good for us to understand, whether or not we get there first. And there could be other parts of philosophy that survive for us, as well. We need to weigh the arguments for ourselves, and make up our own minds, even if the work of finding new arguments comes to belong to a machine.

I’m willing to believe, and even hope that future people will pursue knowledge and understanding in this way. But I don’t find, here, much consolation for my personal grief. I was trained to produce knowledge, not merely to acquire it. In the hours when I’m not teaching or preparing to teach, my job is to discover the truth. The values I imbibed—and I told you I was an obedient kid—held that the prize goes for priority.

Thinking of this world where all we learn is what the bots have discovered first, I feel sympathy with Lee Sedol, the champion Go player who retired after his defeat by Google’s AlphaGo in 2016. For him, losing to AI “in a sense, meant my entire world was collapsing”. “Even if I become the number one, there is an entity that cannot be defeated.” Right or wrong, I would feel the same about my work, in a world with an automated philosophical champ.

But Sedol and I are likely just out-of-date models, with values that a future culture will rightly revise. It’s been more than twenty years since Garry Kasparov lost to IBM’s Deep Blue, but chess has never been more popular. And this doesn’t seem some new-fangled twist of the internet age. I know of no human who quit the high-jump after the invention of mechanical flight. The Greeks sprinted in their Olympics, though they had, long before, domesticated the horse. Maybe we too will come to value the sport of understanding with our own brains.

Frankenstein, Mary Shelley’s 1818 classic of the creations-kill-creator genre, begins with an expedition to the North Pole. Robert Walton hopes to put himself in the annals of science and claim the Pole for England, when he comes upon Victor Frankenstein, floating in the Arctic Sea. It’s only once Frankenstein warms up, that we get into the story everyone knows. Victor hopes he can persuade Walton to turn around, by describing how his own quest for knowledge and glory went south.

Frankenstein doesn’t offer Walton an alternative way of life, a guide for living without grand goals. And I doubt Walton would have been any more personally consoled by the glories of a post-instrumental future than I am. I ended up a philosopher, but I was raised by parents who, maybe like yours, hoped for doctors or lawyers. They saw our purpose in answering real needs, in, as they’d say, contributing to society. Lives devoted to families and friends, fantastic art and games could fill a wondrous future, a world far better than it has ever been. But those aren’t lives that Walton or I, or our parents for that matter, would know how to be proud of. It’s just not the way we were brought up.

For the moment, of course, we’re not exactly short on things to do. The world is full of grisly suffering, sickness, starvation, violence, and need. Frankenstein is often remembered with the moral that thirst for knowledge brings ruination, that scientific curiosity killed the cat. But Victor Frankenstein makes a lot of mistakes other than making his monster. His revulsion at his creation persistently prevents him, almost inexplicably, from feeling the love or just plain empathy that any father should. On top of all we have to do to help each other, we have a lot of work to do, in engineering as much as empathy, if we hope to avoid Frankenstein’s fate.

But even with these tasks before us, my fits of dread are here to stay. I know that the post-instrumental world could be a much better place. But its coming means the death of my culture, the end of my way of life. My fear and grief about this loss won’t disappear because of some choice consolatory words. But I know how to relish the twilight too. I feel lucky to live in a time where people have something to do, and the exploits around me seem more poignant, and more beautiful, in the dusk. We may be some of the last to enjoy this brief spell, before all exploration, all discovery, is done by fully automated sleds.
