Australian educators are using my $5,000 plagiarism settlement to sell schoolkids (on science)

December 13th, 2007

Two months ago, you might remember, the gods of humor and blogging saw fit to bestow on me an unexpected gift:

Model 1: But if quantum mechanics isn’t physics in the usual sense — if it’s not about matter, or energy, or waves — then what is it about?

Model 2: Well, from my perspective, it’s about information, probabilities, and observables, and how they relate to each other.

Model 1: That’s interesting!

“For almost the first time in my life,” I wrote then, “I’m at a loss for words … Help me, readers. Should I be flattered? Should I be calling a lawyer?”

Almost three hundred comments later, your answer was clear. Half of you thought I’d be a stereotypical American jerk, epitomizing everything wrong with modern society, if I sought any redress for the blatant plagiarism of my quantum mechanics lecture. The other half thought I’d be a naïve moron if I didn’t seek redress. However, there did seem to be a rough consensus on two topics: first, that as part of any settlement I should “date the models” (at least a hundred people made some joke to that effect, each one undoubtedly thinking it highly creative); and second, that it was probably okay to try to get something from either Ricoh (the printer company) or Love Communications (the ad agency), as long as the proceeds went to charity and I didn’t directly benefit. Since, like any public figure, I now make all decisions by polling my base, I finally had a warrant for action.

After talking things over informally with Warren Smith — the guy who discovered the Ricoh ad in the first place, who just happens (in one of the many ironies of this case) to be studying Australian intellectual property law, and to whom I’m deeply indebted — I next contacted a lawyer from a well-known Australian law firm. Talking to her confirmed my suspicion that many of the armchair legal theories offered in my comments section were simply mistaken. In Australian copyright law, as in American law, you can’t just take someone’s words and use them for commercial purposes without permission or attribution, regardless of any subjective judgments about the “uniqueness” or “specialness” of those words. I was on strong legal ground. But there was also a key difference between the Australian and American legal systems: Australian lawyers are prohibited from taking cases on a contingency basis. If I wanted the law firm to pursue the case, then I would have to pay them up-front.

Disregarding the pleas of my relatives — who at this point were begging me to sue — I instead wrote to Love Communications directly, proposing a settlement to be donated (for example) to a mutually-agreed-upon Australian science outreach organization. We eventually agreed to a settlement of AUS$5,000. Considering the value of Love Communications’ Ricoh account — which the Sydney Morning Herald reported as more than AUS$1,000,000 — I thought the ad agency was getting off incredibly easily, but at least I wouldn’t have to write any more letters or deal with lawyers.

The one remaining problem was to find a suitable Australian science outreach organization to which to donate the $5,000, and that would also be amusing to blog about. As I chewed on this problem, my mind wandered back to my visit to Brisbane in December 2005, and to a conversation I’d had there with Jennifer Dodd — then of the University of Queensland and now of the Perimeter Institute in Waterloo. In that conversation, Jen had told me about a popular-science lecture series in Brisbane she’d founded, called BrisScience. I’d immediately asked her to repeat the name.

“BrisScience,” she said.

“Spell it?” I asked.

“B-r-i-s-Science. Why, is there something funny about the name?”

“No, no, it shouldn’t be a big deal in Australia.”

Aha! I now emailed Jen to ask whether BrisScience had continued its “cutting-edge” outreach programs in her absence. Jen replied that yes, it had, and she put me in touch with Joel Gilmore, BrisScience’s current director. Joel told me that not only was BrisScience still going strong (with the next lecture, by Bill Phillips, being about quantum mechanics), but that he (Joel) also directed another science outreach program called the Physics Demo Troupe, which does hands-on science shows for schoolkids in Brisbane and rural areas. Joel proposed that we donate $2,000 of the settlement to BrisScience and $3,000 to the Physics Demo Troupe, the latter supporting a visit to the Torres Strait Islands in North Queensland. I agreed, and Love Communications agreed as well.

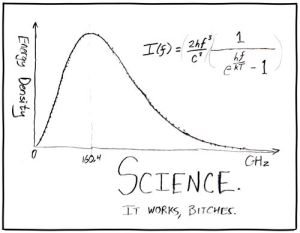

I am, of course, gratified that this sordid southern-hemisphere tale of sex, plagiarism, quantum mechanics, and printers could be resolved to everyone’s satisfaction, without the need for a courtroom battle, and that schoolkids in the Torres Strait Islands might even learn some physics as a result. But is there one sentence with which to conclude this saga, one sublimely fatuous thought that sums up my feelings toward the entire affair? Wait for it… wait for it…

That’s interesting.
