NAND now for something completely different

There was a real breakthrough in quantum algorithms last week — though you wouldn’t have known about it from reading Slashdot, Yahoo News, The Economist, or (for that matter) this blog.

Farhi, Goldstone, and Gutmann — the feared MIT trio — announced a quantum algorithm for evaluating NAND trees in O(√N) time. This solves a problem that I worked on as an undergrad nine years ago (!), and that many a tyro had unsuccessfully tackled since.

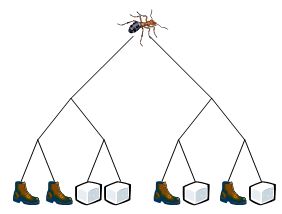

Alright, so suppose we’ve got this ant at the root of a complete binary tree:

You and your friend take turns moving the ant: first you can move it either down-and-left or down-and-right, then your friend can make the same choice, then you, etc. If the ant ends up at a sugar cube, you win the game; if it ends up at a boot, your friend wins. (Your friend is an exterminator.)

In the above example, it’s not hard to see that you’re the one with a winning strategy. But more generally, we can imagine a tree d levels deep, with an arbitrary sequence of N=2d boots and sugar cubes at the leaf vertices. Then the question is: how many of the leaf vertices do you have to examine, in order to decide whether you or your friend has the win?

The goal here is to model games of alternation like chess and go, abstracting away the details. The boots and sugar cubes correspond to losing and winning board positions. Then we want to know: how many board positions would a computer have to evaluate, in order to play the game perfectly?

It’s clear that you generally don’t have to examine all the positions. For example, suppose that at some position where it’s your turn to move, you discover a move that always lets you win. Then you don’t care what happens if you make any other move from that position.

Based on this idea (which AI types call alpha-beta pruning), in 1986 Saks and Wigderson gave a randomized algorithm to find an optimal move in the ant game, after examining (on average) only N0.753 of the N leaf vertices. (Here 0.753 ≈ log2(1+√33)-2.) On the other hand, they also showed that this running time was optimal for randomized algorithms with no error. Then, in 1995, Santha showed that it was optimal even for randomized algorithms with error.

Alright, but what about the quantum case? It was observed early on (by a simple reduction from the PARITY problem) that any quantum algorithm for playing the ant game would have to examine at least √N of the N leaf vertices. But was a √N running time achievable? Until last week, we knew of no quantum algorithm that did even slightly better than the classical bound of N0.753.

And now it’s time to eat some crow: I didn’t believe there was such a quantum algorithm. I thought N0.753 was optimal. In my defense, though, this was never really a very serious belief, in contrast to (say) my belief that quantum computers can’t solve NP-complete problems in polynomial time. Really I only claimed N0.753 was optimal to try and goad people into proving me wrong. And today, I’m pleased to report that my strategy was successful.

Last Wednesday, Farhi, Goldstone, and Gutmann put out a preprint showing how to find an optimal move for the ant game in time O(√(N log N)). However, their algorithm only worked in the “Hamiltonian oracle model,” a fanciful idealization preferred by physicists in which time is (get this) continuous rather than discrete. Two days later, Childs, Cleve, Jordan, and Yeung showed how to port the algorithm to the ordinary discrete model, except that there the running time goes up to N1/2+ε for any ε>0. Then, just yesterday, Farhi, Goldstone, and Gutmann improved the running time in the Hamiltonian oracle model to the optimal O(√N). One hopes and expects that further improvements in the discrete model are forthcoming.

Another obvious question is whether any game tree can be evaluated in O(√N) time, not just the complete binary tree used in the ant game. Since the complete binary tree was previously considered the “hardest” case, the natural conjecture would be yes.

Years ago, David Deutsch gave an interview in which he illustrated Grover’s algorithm using chess. I emailed Deutsch to point out that this was a bad example: at the time, we we had no idea how to get a Grover speedup for games of alternation with small branching factor. Deutsch dutifully posted a correction. Now I guess I’ll have to email him again, to tell him one can get a “Grover” speedup for games like chess after all.

I put “Grover” in quotes because, even though the Farhi-Goldstone-Gutmann algorithm achieves a square-root speedup, it doesn’t actually look anything like Grover’s algorithm. Instead it’s based on a quantum walk (reminiscent of this paper), which is analyzed using tools from scattering theory. Apparently, physics occasionally does come in handy for quantum computing.

All in all, this business with NAND trees has only confirmed my core belief about theoretical computer science: that there are no miracles, except when there are.

Follow

Follow

Comment #1 February 23rd, 2007 at 7:01 am

Scott,

In the previous thread you said that if a fast quantum algorithm for NP-complete problems existed, then just like a fast classical algorithm, it would have to be “. . . radically different from anything that’s yet been imagined.”

And here you say that even though the Farhi-Goldstone-Gutmann algorithm achieves a square-root speedup, it doesn’t actually “. . . look anything like Grover’s algorithm.”

Viewing these two statements together gives, I believe, at least some reason for subdued optimism that clever ways (perhaps completely contrary to the intuition — as Peter Shor says) will be found to solve problems like finding a a fast quantum algorithm for NP-complete problems.

Comment #2 February 23rd, 2007 at 7:25 am

Michael, the difference is “merely” a quantitative one.

NAND trees in √N: One of the biggest surprises of the year

NP ⊆ BQP: Would be the biggest surprise of my life

NAND trees in √N: Can get a Grover speedup for chess

NP ⊆ BQP: Could become God

NAND trees in √N: Eating crow (figuratively)

NP ⊆ BQP: Would eat an actual crow (bones, feathers, and all)

Comment #3 February 23rd, 2007 at 7:36 am

Scott,

I guess in the end, I’m just an optimist — and I don’t make any claim to have a lot of good reasons for optimism, other than simply a matter of taste. I imagine that if prepared appropriately, even crow can be delicious.

Comment #4 February 23rd, 2007 at 7:45 am

In some twisted sense, I’m also an optimist: I believe we’ll succeed in proving things impossible.

Comment #5 February 23rd, 2007 at 8:09 am

Scott,

Your post of 7:25 am makes me a little sad that I will probably not read anything comparably funny for the rest of the day.

Comment #6 February 23rd, 2007 at 8:31 am

would you eat the crow if someone proves that NP ⊆ BQP but as well a quantum lower bound for 3Sat of say n^1000.

Comment #7 February 23rd, 2007 at 9:32 am

Nice, log2(1+√33)-2 versus a clean, crisp 1/2.

Yet another “argument by elegance” showing that quantum mechanics gives the true theory of computation, while classical computation is just an ugly approximation.

Comment #8 February 23rd, 2007 at 9:51 am

would you eat the crow if someone proves that NP ⊆ BQP but as well a quantum lower bound for 3Sat of say n^1000.

Sure. Maybe a smaller crow.

Comment #9 February 23rd, 2007 at 10:10 am

Wim van Dam remarks (with a light grammatic clean-up): “Quantum mechanics gives the true theory of computation, to which classical computation is just an approximation.”

The text might continue …

Corollary I: “Analytic extension gives the true theory of functional analysis, to which real-valued analysis is just an approximation.”

Corollary II: “Quantum model order reduction gives the true theory of system simulation, to which classical model order reduction is just an approximation.”

Remark: In all three instances, mathematical power comes from intoducing invariances by “complexifying” a real-valued formalism, then exploiting these invariances.

Comment #10 February 23rd, 2007 at 1:57 pm

Now I guess I’ll have to email [Deutsch] again, to tell him one can get a “Grover” speedup for games like chess after all.

I put “Grover” in quotes because, even though the Farhi-Goldstone-Gutmann algorithm achieves a square-root speedup …

Yes, though technically the speedup is N2/3 (actually N0.6633…), when you compare quantum with classical.

Comment #11 February 23rd, 2007 at 1:59 pm

My superscripts didn’t come out: I meant N^2/3 and N^0.6633…

Comment #12 February 23rd, 2007 at 2:29 pm

I don’t think I’ll ever be able to think about alternation again without that picture flashing in my head.

Comment #13 February 23rd, 2007 at 2:44 pm

But more generally, we can imagine a tree d levels deep, with an arbitrary sequence of N=2^d boots and sugar cubes at the leaf vertices.

I take it that “arbitrary” means adversarial, rather than random?

By the way, Deutsch may still have a leg to stand on in the specific case of chess, because you get a classical acceleration not just from alpha-beta pruning, but also from hashing positions.

Comment #14 February 23rd, 2007 at 5:21 pm

Greg Kuperberg Says: I take it that “arbitrary” means adversarial, rather than random?

Just to reflect, in real-life games like chess, the sugar-cube distribution is invariably neither random nor adversarial, but rather is favorably distributed for heuristic evaluation (rather as though the sugar cubes liked to “clump” together).

For example, the invincible (to us mere mortal humans) chess-program Rybka is widely believed to exploit this structure to achieve search efficiency that is super-alpha/beta (to try your hand at equalling Rybka’s 3000+ Elo level of chess play, click here).

Interestingly, the search algorithm used by Rybka has never been disclosed … every other chess-engine designer is presently trying to figure it out!

In contrast to chess, games like go, which have a high branching ratio and less-obvious “clumping of the sugar cubes”, are presently immune to master-level algorithmic play. However it is that humans play go, it seems to be mighty tough to capture in an explicit algorithm.

My understanding of backgammon is that machines presently beat humans, by using neural nets trained in thousands of randomly-generated games, rather than human-designed algorithms. Perhaps someone can confirm this?

That all three games have a non-adversarial structure is no doubt essential to their enduring human interest. E.g., games like “factor the enormous integer” or “assemble the huge blank jigsaw of small rectangular pieces” can only be solved by a quantum computer precisely because of their lack of humanly (or algorithmically) exploitable structure.

Comment #15 February 23rd, 2007 at 5:28 pm

O(sqrt(N)*poly(d)) algorithm for depth-d formulas

We (Andrew Childs, Ben Reichardt, Robert Spalek, and Shengyu Zhang) have found an O(sqrt(N)*poly(d)) algorithm for general depth-d formulas (not necessarily balanced or binary). Our algorithm is inspired by the Farhi/Goldstone/Gutmann algorithm. However, it uses quantum phase estimation instead of scattering theory and continuous-time quantum walks. Our writeup will hopefully by ready for the arXiv within the new few days.

Comment #16 February 23rd, 2007 at 5:36 pm

Robert: Thanks for the announcement!

Comment #17 February 24th, 2007 at 1:15 am

Sorry, I can’t resist. In terms of crows, what would you do if quantum computers turned out to be infeasible?

Comment #18 February 24th, 2007 at 1:31 am

If a fundamental reason is discovered why quantum computing is impossible, I’ll eat some crow meat, but no bones or feathers.

See, I’ve never had any emotional commitment to quantum computing being possible. For me, the key point is that either quantum computing is possible, or quantum mechanics (at least as physicists have understood it for 80 years) is wrong. And while I’m betting on the former possibility, I’m hoping for the latter, since it would be much more scientifically interesting.

Comment #19 February 24th, 2007 at 4:45 am

Scott sez Either quantum computing is possible, or quantum mechanics (at least as physicists have understood it for 80 years) is wrong. … or quantum error correction is practically infeasible.

Because, don’t we have to be wary of a non-excluded middle here?

There is a historical precedent: the belief in the 1940s and 1950s of Pauling, von Neumann, Weiner, and Feynman, the atomic-resolution biomicroscopy was achievable by electron microscopy (or other high-energy means). Basically, they argued that “Either atomic-resolution microscopy is possible, or wave mechanics (at least as physicists have understood it for 30 years) is wrong.”

Their generation was reluctant to embrace the non-excluded middle “… or else radiation damage is a show-stopper.” This falsely-excluded middle was a very convenient belief for their generation — surely so mundane a mechanism as radiation damage would not permanently obstruct such a noble scientific enterprise? Yet so it proved.

Had their generation taken the problem of radiation damage more seriously, and looked systematically for a way around it, they might have invented magnetic resonance imaging almost 50 years earlier. They had all the required physics and mathematics in-hand, as early as 1935. And so a great opportunity was overlooked for a long time.

Personally, I admire and have great respect for the formalism of quantum error correction. However, history teaches us to have equal or greater respect for the ingenuity of Mother Nature’s decoherence mechanisms!

Just as the physicists of the 1920s-1980s did not have a good grasp on the potentialities of microscopy—in part because they were blinded by a natural reluctance to embrace “the middle”—it is IMHO completely possible that we do not yet have a good grasp on the potentialities of quantum computation and simulation, and that the “middle” we are reluctant to embrace is noise.

Comment #20 February 24th, 2007 at 5:31 am

John: First, I don’t “sez” anything.

Second, precisely because of issues like the ones you mention, I was very careful to refer not to quantum mechanics, but to quantum mechanics as physicists have understood it for 80 years. If noise were enough to shift us from one theory of computation to another, to me that would change our entire picture of QM — in a way that picture decidedly isn’t changed by the impracticality of a specific technology like atomic-resolution microscopy.

Comment #21 February 24th, 2007 at 5:56 am

Scott, I do apologize for the “sez” — and by the way I’m a huge fan of your witty and erudite writing style!

With regard to the need for care in speaking, e.g., “physics as physicists have understood it for 80 years”, I am pretty confident that we mainly agree.

There may be a difference in emphasis, though. Being young, optimistic, and in a hurry, younger people tend to focus upon breakthroughs as the primary path to new understanding. Breakthrough stories are what journalists prefer too, because they’re easier to write about.

There is also, however, a slower path of change—equally important IMHO—in which the entire perspective of a community shifts, and the main emphasis is upon synthesis, especially engineering synthesis.

E.g., it would be hard to argue that magnetic resonance imaging represented a breakthrough in “new physics” or “new mathematics”. Rather the breakthrough was one of a new perspective upon imaging, and also, the engineering synthesis of many established ideas into a unified practical technology.

Obviously, both paths—breakthrough and synthesis—are essential to the health of the science and technology ecosystem. They are directly related, one to the other.

IMHO, one of the many respects in which your blog serves the community well, is as a forum where both points of view are discussed, and from which both benefit. For this, thank you very much!

Comment #22 February 24th, 2007 at 11:14 am

John Sidles wrote:

“[….] Remark: In all three instances, mathematical power comes from int[r]oducing invariances by “complexifying” a real-valued formalism, then exploiting these invariances.”

I hear this a lot, but I don’t think it makes a lot of sense. The power of quantum computing has nothing to do with the amplitudes being complex; nothing changes (substantially) if we restrict ourselves to real valued amplitudes.

Comment #23 February 24th, 2007 at 12:44 pm

The power of quantum computing has nothing to do with the amplitudes being complex; nothing changes (substantially) if we restrict ourselves to real valued amplitudes.

But it has everything to do with the fact that the amplitudes don’t have to be positive. Or even more to the point, that quantum probability violates positivity properties of classical probability. Qauntum computing affords a more powerful kind of statistical sampling than classical randomized computing. That is what I think is the heart of the matter.

Comment #24 February 24th, 2007 at 3:40 pm

Greg Kuperberg wrote: “But it has everything to do with the fact that the amplitudes don’t have to be positive.[…]”

I absolutely agree and I think that this is the most clear when you ‘stick’ to real valued amplitudes (as opposed to the more general complex valued ones). And because of that, I think that the quantum generalization of classical computing can not at all be compared (and has nothing to do) with the relation between complex and real analysis.

Comment #25 February 24th, 2007 at 4:26 pm

Wim van Dam says “… The power of quantum computing has nothing to do with the amplitudes being complex.”

What you say is true. And I also agree with Greg that it then becomes essential that real amplitudes can be negative, such that interference can still occur; this point is discussed by Feynman at some length in his 1982 article Simulating physics with computers.

But AFAICT, doing quantum computations (or more broadly, computing quantum simulations for large-scale systems) is made much easier when the state space is “complexified”.

There seem to be at least two mathematical reasons for this (and maybe people can suggest still more reasons than these two). The first is that complexification creates a powerful invariance, namely, the unitary invariance associated with the ambiguity of the unraveling of simulated trajectories. The second is that when we do model order reduction of a large-scale quantum system, the geometry of the complexified state space is a Kahler geometry — much nicer than a Riemannian geometry.

Comment #26 March 12th, 2007 at 8:22 am

Real valued quantum computing. Bah. Real quantum computing die hards perfer quantum computing based on the symplectic Lie group.